Identifying and Measuring Requirements Quality

The Essential Guide to Requirements Management and Traceability


Chapter 2: Identifying and Measuring Requirements Quality



Jama Software has partnered with Karl Wiegers to share licensed content from his books and articles. Karl Wiegers is an independent consultant and not an employee of Jama Software. He can be reached at ProcessImpact.com.

In this chapter, we discuss how to measure requirements accurately and why disciplined organizations collect a focused set of metrics on all their projects.

These metrics provide insight into the size of the product; the effort, time, and money that the project or individual tasks consumed; the project status; and the product’s quality. Because requirements are an essential project component, you should measure several aspects of your requirements engineering activities. In this chapter, adapted from Karl Wiegers’s book More About Software Requirements, we cover several meaningful metrics related to requirements activities on your projects.

Product Size

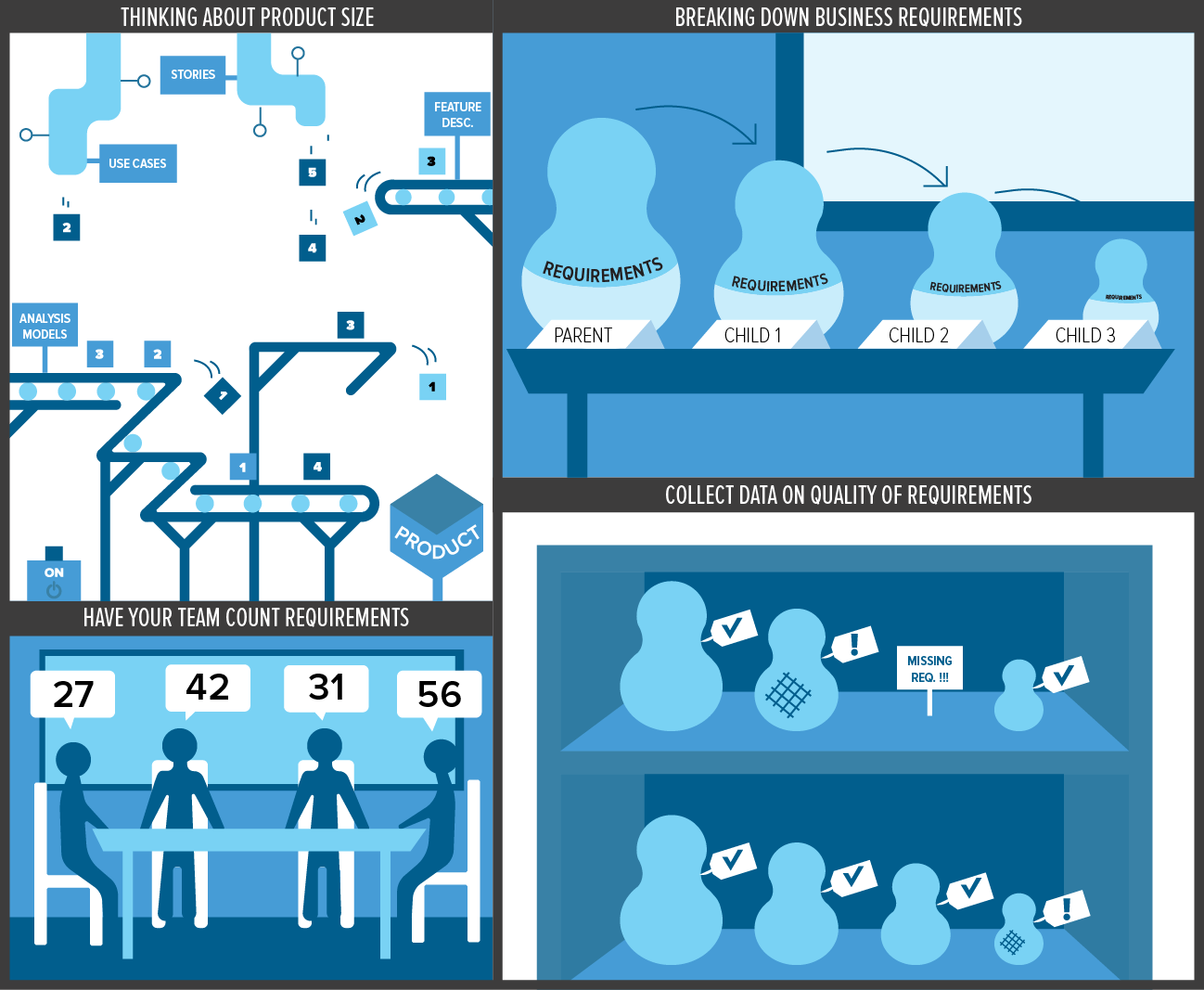

The most fundamental metric is the number of requirements in a body of work. Your project might represent requirements by using a mix of use cases, functional requirements, user stories, feature descriptions, event-response tables, and analysis models. However, the team ultimately implements functional requirements: descriptions of how the system should behave under specific conditions.

Begin your requirements measurement by simply counting the individual functional requirements that are allocated to the baseline for a given product release or development iteration. If different team members can’t count the requirements and get the same answer, you have to wonder what other sorts of ambiguities and misunderstandings they’ll experience. Knowing how many requirements are going into a release will help you judge how the team is progressing toward completion because you can monitor the backlog of work remaining to be done. If you don’t know how many requirements you need to work on, how will you know when you’re done?
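To make the backlog idea concrete, here is a minimal Python sketch. It assumes each requirement is a simple record with a hypothetical `release` field (the baseline or iteration it is allocated to) and a hypothetical `done` flag; real requirements tools store this information differently.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    req_id: str
    release: str  # baseline or iteration the requirement is allocated to (hypothetical field)
    done: bool    # True once the requirement is complete (hypothetical field)


def release_progress(reqs: list[Requirement], release: str) -> tuple[int, int]:
    """Return (requirements allocated to the release, requirements still remaining)."""
    allocated = [r for r in reqs if r.release == release]
    remaining = [r for r in allocated if not r.done]
    return len(allocated), len(remaining)


reqs = [
    Requirement("FR-1", "R1", True),
    Requirement("FR-2", "R1", False),
    Requirement("FR-3", "R1", False),
    Requirement("FR-4", "R2", False),
]
total, remaining = release_progress(reqs, "R1")
print(f"{total} requirements in R1, {remaining} still in the backlog")
```

Running the count repeatedly over the life of the release gives the remaining-work trend the paragraph describes.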

Of course, not all functional requirements will consume the same implementation and testing effort. If you’re going to count functional requirements as an indicator of system size, your analysts will need to write them at a consistent level of granularity. One guideline is to decompose high-level requirements until the child requirements are all individually testable. That is, a tester can design a few logically related tests to verify whether a requirement was correctly implemented. Count the total number of child requirements, because those are what developers will implement and testers will test. Alternative requirements sizing techniques include use case points and story points. All of these methods involve estimating the relative effort to implement a defined chunk of functionality.
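If requirements are stored as a parent/child hierarchy, counting the child requirements that developers implement and testers test amounts to counting leaf nodes. A minimal sketch, assuming a hypothetical dictionary that maps each requirement ID to its children, and assuming every leaf has already been decomposed far enough to be individually testable:

```python
def count_leaf_requirements(children: dict[str, list[str]], root: str) -> int:
    """Count leaf requirements (those with no children) under root."""
    stack = [root]
    leaves = 0
    while stack:
        node = stack.pop()
        kids = children.get(node, [])
        if kids:
            stack.extend(kids)  # keep decomposing
        else:
            leaves += 1         # a testable child requirement
    return leaves


# Hypothetical decomposition of one high-level requirement.
hierarchy = {
    "HL-1": ["FR-1", "FR-2"],
    "FR-2": ["FR-2.1", "FR-2.2", "FR-2.3"],
}
print(count_leaf_requirements(hierarchy, "HL-1"))  # 4
```

The traversal assumes a strict tree; if one child requirement can appear under several parents, you would need to deduplicate IDs to avoid counting it more than once.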

Functional requirements aren’t the whole story, of course. Stringent nonfunctional requirements can consume considerable design and implementation effort. Some functionality is derived from specified nonfunctional requirements, such as security requirements, so that derived functionality should be included in the functional requirements size estimate. However, not all nonfunctional requirements will be reflected in this size estimate, so be sure to consider their impact on your effort estimate. Consider the following situations:

- If the user must have multiple ways to access specific functions to improve usability, it will take more development effort than if only one access mechanism is needed.

- Imposed design and implementation constraints, such as multiple external interfaces to achieve compatibility with an existing operating environment, can lead to a lot of interface work even though you aren’t providing additional new product functionality.

- Strict performance requirements might demand extensive algorithm and database design work to optimize response times.

- Rigorous availability and reliability requirements can imply significant work to build in failover and data recovery mechanisms, as well as having implications for the system architecture you select.

You’ll also find it informative to track the growth in requirements as a function of time, no matter what requirements size metric you use. One of my clients found that their projects typically grew in size by about twenty-five percent before delivery. Amazingly, they also ran about twenty-five percent over the planned schedule on most of their projects. Coincidence? I think not.
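Tracking growth only requires the size at baseline and a series of later snapshots, whatever size metric you choose. The sketch below assumes weekly snapshots of a simple requirements count; the numbers are invented for illustration and are not the client data mentioned above.

```python
def growth_percent(baseline: int, current: int) -> float:
    """Percentage growth in requirements size relative to the baseline."""
    if baseline <= 0:
        raise ValueError("baseline size must be positive")
    return 100.0 * (current - baseline) / baseline


# Hypothetical weekly snapshots of the requirements count for one release.
snapshots = [200, 208, 215, 231, 250]
for week, count in enumerate(snapshots):
    print(f"week {week}: {count} requirements, {growth_percent(snapshots[0], count):+.1f}%")
```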

Requirements Quality

Consider collecting some data regarding the quality of your requirements. Inspections of requirements specifications are a good source of this information. Count the requirements defects you discover and classify them into various categories: missing requirements, erroneous requirements, unnecessary requirements, incompleteness, ambiguities, and so forth. Use defect type frequencies and root-cause analysis to tune up your requirements processes so the team makes fewer of these types of errors in the future. For instance, if you find that missing requirements are a common problem, your elicitation approaches need some adjustments. Perhaps your business analysts aren’t asking enough questions or the right questions, or maybe you need to engage more appropriate user representatives in the requirements development process.
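Classifying defects lends itself to a simple frequency count, sorted so that the most common categories, the ones most worth a root-cause analysis, come first. A minimal sketch; the category labels follow the list above, and the defect log is hypothetical:

```python
from collections import Counter

# Hypothetical inspection log: one category label per requirements defect found.
defect_log = [
    "missing", "ambiguous", "missing", "erroneous",
    "ambiguous", "missing", "unnecessary", "incomplete",
]

counts = Counter(defect_log)
total = sum(counts.values())
for category, n in counts.most_common():
    print(f"{category:12s} {n:3d}  {100 * n / total:5.1f}%")
```

In this made-up log, missing requirements dominate, which by the reasoning above would point at the elicitation approach.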

If the team members don’t think they have time to inspect all their requirements documentation, try inspecting a sample of just a few pages. Then calculate the average defect density—the number of defects found per specification page—for the sample. Assuming that the sample was representative of the entire document (a big assumption), you can multiply the number of uninspected pages by this defect density to estimate the number of undiscovered defects that could still lurk in the specification. Less experienced inspectors might discover only, say, half the defects that actually are present, so use this estimated number of undiscovered defects as a lower bound. Inspection sampling can let you assess the document’s quality so that you can determine whether it’s cost effective to inspect the rest of the requirements specification. The answer will almost certainly be yes.
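The sampling arithmetic fits in a few lines. A minimal sketch with hypothetical page and defect counts; like the method it illustrates, it assumes the sample is representative, and its result should be read as a lower bound:

```python
def estimate_undiscovered(sample_pages: float, sample_defects: int,
                          uninspected_pages: float) -> tuple[float, float]:
    """Return (defects per page in the sample, estimated defects in uninspected pages).

    Assumes the inspected sample is representative of the whole specification.
    Because inspectors miss some defects, treat the estimate as a lower bound.
    """
    if sample_pages <= 0:
        raise ValueError("the sample must contain at least part of a page")
    density = sample_defects / sample_pages
    return density, density * uninspected_pages


# Hypothetical: 5 of 40 pages inspected, 12 defects found in the sample.
density, estimate = estimate_undiscovered(5, 12, 35)
print(f"{density:.1f} defects/page; at least ~{estimate:.0f} more in the other 35 pages")
```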

Also, keep records of requirements defects that are identified after the requirements are baselined, such as requirements-related problems discovered during design, coding, and testing. These represent errors that leaked through your quality control filters during requirements development. Calculate the percentage of the total number of requirements errors that the team caught at the requirements stage. Removing requirements defects early is far cheaper than correcting them after the team has already designed, coded, and tested the wrong requirements.
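The percentage of requirements errors caught at the requirements stage is a simple ratio once both counts are recorded. A minimal sketch with hypothetical counts:

```python
def requirements_stage_catch_rate(found_in_requirements: int, found_later: int) -> float:
    """Percentage of all known requirements defects that were caught before baselining."""
    total = found_in_requirements + found_later
    if total == 0:
        raise ValueError("no requirements defects recorded")
    return 100.0 * found_in_requirements / total


# Hypothetical: 45 caught during requirements work; 15 leaked into design, coding, and testing.
print(f"{requirements_stage_catch_rate(45, 15):.1f}%")  # 75.0%
```

The figure can only rise as late defects keep surfacing, so it is most meaningful after the release has been in use for a while.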

Two informative metrics to calculate from inspection data are efficiency and effectiveness. Efficiency refers to the average number of defects discovered per labor hour of inspection effort. Effectiveness refers to the percentage of the defects originally present in a work product that were discovered by inspection. Effectiveness will tell you how well your inspections (or other requirements quality techniques) are working. Efficiency will tell you what it costs you, on average, to discover a defect through inspection. You can compare that cost with the cost of dealing with requirements defects found later in the project or after delivery to judge whether improving the quality of your requirements is cost-effective.
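Both metrics can be sketched directly from their definitions. The number of defects "originally present" is never known exactly; this sketch assumes, as an approximation, that it equals the defects found by the inspection plus the defects in the same work product found afterward. All numbers are hypothetical.

```python
def inspection_efficiency(defects_found: int, labor_hours: float) -> float:
    """Average defects discovered per labor hour of inspection effort."""
    return defects_found / labor_hours


def inspection_effectiveness(defects_found: int, defects_found_later: int) -> float:
    """Percentage of the (estimated) defects originally present that inspection caught.

    Approximates 'originally present' as inspection defects plus defects
    traced back to the same work product after the inspection.
    """
    return 100.0 * defects_found / (defects_found + defects_found_later)


# Hypothetical: 4 inspectors x 3 hours found 30 defects; 10 more surfaced later.
hours, found, later = 12, 30, 10
print(f"efficiency: {inspection_efficiency(found, hours):.2f} defects/hour")
print(f"effectiveness: {inspection_effectiveness(found, later):.1f}%")
print(f"cost per defect: {hours / found:.1f} labor hours")
```

The last line is just the inverse of efficiency, which is the per-defect cost you would compare against the cost of fixing a requirements defect found later.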

The sections that follow address metrics related to requirements status, change requests, and the effort expended on requirements development and management activities.


Requirements Status

Track the status of each requirement over time to monitor overall project status, perhaps by defining a requirement attribute to store this information. Status tracking can help you avoid the pervasive “ninety percent done” problem of software project tracking. At any given time, each requirement has one of the following statuses:

- Proposed (someone suggested it)

- Approved (it was allocated to a baseline)

- Implemented (the code was designed, written, and unit tested)

- Verified (the requirement passed its tests after integration into the product)

- Deferred (the requirement will be implemented in a future release)

- Deleted (you decided not to implement it at all)

- Rejected (the idea was never approved)

Other status options are possible, of course. Some organizations use a status of “Reviewed” because they want to confirm that a requirement is of high quality before allocating it to a baseline. Other organizations use “Delivered to Customer” to indicate that a requirement has actually been released.

When you ask a developer how he is coming along, he might say, “Of the eighty-seven requirements allocated to this subsystem, sixty-one of them are verified, nine are implemented but not yet verified, and seventeen aren’t yet completely implemented.” There’s a good chance that not all these requirements are the same size, will consume the same amount of implementation effort, or will deliver the same customer value. If I were a project manager, though, I’d feel that we had a good handle on the size of that subsystem and how close we were to completion. This is far more informative than, “I’m about ninety percent done. Lookin’ good!”
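A status report like the developer’s answer above can be generated from the status attribute. A minimal sketch, assuming the statuses listed earlier and assuming that Approved, Implemented, and Verified are the statuses of requirements currently allocated to the baseline (Proposed, Deferred, Deleted, and Rejected are left out of the total):

```python
from collections import Counter
from enum import Enum


class Status(Enum):
    PROPOSED = "Proposed"
    APPROVED = "Approved"
    IMPLEMENTED = "Implemented"
    VERIFIED = "Verified"
    DEFERRED = "Deferred"
    DELETED = "Deleted"
    REJECTED = "Rejected"


def status_report(statuses: list[Status]) -> str:
    """Summarize the requirements currently allocated to a baseline."""
    counts = Counter(statuses)
    verified = counts[Status.VERIFIED]
    implemented = counts[Status.IMPLEMENTED]
    not_implemented = counts[Status.APPROVED]  # allocated but not yet implemented
    total = verified + implemented + not_implemented
    return (f"Of the {total} requirements allocated, {verified} are verified, "
            f"{implemented} are implemented but not yet verified, and "
            f"{not_implemented} aren't yet completely implemented.")


# Reproduces the subsystem example; the 3 deferred requirements are excluded.
subsystem = ([Status.VERIFIED] * 61 + [Status.IMPLEMENTED] * 9
             + [Status.APPROVED] * 17 + [Status.DEFERRED] * 3)
print(status_report(subsystem))
```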

Requests for Changes

Much of requirements management involves handling requirement additions, modifications, and deletions. Therefore, track the status and impact of your requirements change requests. The data you collect should let your team answer questions such as the following:

- How many change requests were submitted in a given time period?

- How many of these requests are open, and how many are closed?

- How many requests were approved, and how many were rejected?

- How much effort did the team spend implementing each approved change?

- How long have the requests been open on average?

- On average, how many individual requirements or other artifacts are affected by each submitted change request?
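The questions above can be answered from a small change request record. A minimal sketch with hypothetical field names and data; "how long requests have been open" is interpreted here as the average age of the requests that are still open, which is one reasonable reading but an assumption:

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class ChangeRequest:
    submitted: date
    closed: Optional[date]     # None while the request is still open
    approved: Optional[bool]   # None until a decision is made
    effort_hours: float = 0.0  # effort spent implementing it, if approved
    affected_items: int = 0    # requirements or other artifacts it touched


def summarize(crs: list[ChangeRequest], start: date, end: date, today: date) -> dict:
    """Answer the tracking questions for requests submitted between start and end."""
    period = [cr for cr in crs if start <= cr.submitted <= end]
    still_open = [cr for cr in period if cr.closed is None]
    approved = [cr for cr in period if cr.approved is True]
    rejected = [cr for cr in period if cr.approved is False]
    return {
        "submitted": len(period),
        "open": len(still_open),
        "closed": len(period) - len(still_open),
        "approved": len(approved),
        "rejected": len(rejected),
        "mean_effort_approved": mean(cr.effort_hours for cr in approved) if approved else 0.0,
        "avg_days_open": mean((today - cr.submitted).days for cr in still_open) if still_open else 0.0,
        "avg_affected": mean(cr.affected_items for cr in period) if period else 0.0,
    }


crs = [
    ChangeRequest(date(2024, 1, 3), date(2024, 1, 10), True, 16.0, 3),
    ChangeRequest(date(2024, 1, 5), date(2024, 1, 8), False, 0.0, 1),
    ChangeRequest(date(2024, 1, 20), None, None, 0.0, 2),
    ChangeRequest(date(2024, 2, 2), None, None, 0.0, 4),  # outside the January window
]
summary = summarize(crs, date(2024, 1, 1), date(2024, 1, 31), today=date(2024, 2, 19))
print(summary)
```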

Monitor how many changes are incorporated throughout development after you baseline the requirements for a specific release. Note that a single change request can affect multiple requirements of different levels and types (user requirements, functional requirements, nonfunctional requirements). To calculate requirements volatility over a given time period, divide the number of changes by the total number of requirements at the beginning of the period (for example, at the time a baseline was defined):

Requirements volatility = number of requirements changes during the period / number of requirements at the beginning of the period
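A minimal Python sketch of the volatility calculation. It counts each affected requirement as one change, which is an assumption consistent with the note that one change request can touch several requirements; the numbers are hypothetical.

```python
def requirements_volatility(changes_in_period: int, requirements_at_start: int) -> float:
    """Requirements changes during a period divided by the requirements count at its start."""
    if requirements_at_start <= 0:
        raise ValueError("need a positive requirements count at the start of the period")
    return changes_in_period / requirements_at_start


# Hypothetical: 240 requirements at baseline; 18 requirement changes during the month.
v = requirements_volatility(18, 240)
print(f"volatility: {v:.3f} ({v:.1%})")
```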

The intent is not to try to eliminate requirements volatility. There are often good reasons to change requirements. However, we need to ensure that the project can manage the degree of requirements changes and still meet its commitments. Changes become more expensive as the product nears completion, and a sustained high level of approved change requests makes it difficult to know when you can ship the product. Most projects should become more resistant to making changes as development progresses, meaning the trend of accepted changes should approach zero as you near the planned completion date for a given release. An iterative development approach gives the team multiple opportunities to incorporate changes into subsequent iterations, while still keeping each iteration on schedule.

If you receive many change requests, that suggests that elicitation overlooked many requirements or that new ideas keep coming in as the project drags along month after month. Record where the change requests come from: marketing, users, sales, management, developers, and so on. The change request origins will tell you who to work with to reduce the number of overlooked, modified, and misunderstood requirements.
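Recording the origin alongside the decision on each request shows which groups submit the most changes and how many of those changes are accepted. A minimal sketch with hypothetical data:

```python
from collections import Counter, defaultdict

# Hypothetical (origin, decision) pairs for change requests received so far.
requests = [
    ("users", "approved"), ("users", "approved"), ("marketing", "rejected"),
    ("sales", "approved"), ("users", "rejected"), ("marketing", "approved"),
    ("developers", "approved"), ("users", "approved"),
]

table: dict[str, Counter] = defaultdict(Counter)
for origin, decision in requests:
    table[origin][decision] += 1

# Busiest sources first.
for origin, decisions in sorted(table.items(), key=lambda kv: -sum(kv[1].values())):
    print(f"{origin:11s} total={sum(decisions.values())} "
          f"approved={decisions['approved']} rejected={decisions['rejected']}")
```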

Change requests that remain unresolved for a long time suggest that your change management process isn’t working well. I once visited a company where a manager wryly admitted that they had enhancement requests that were several years old and still pending. This team should allocate a certain number of their open requests to specific planned maintenance releases and convert other long-term deferred change requests to a status of rejected. This would help the project manager focus the team’s energy on the most important and most urgent items in the change backlog.


Effort

Finally, we recommend that you record the time your team spends on requirements engineering activities. These activities include both requirements development (getting and writing good requirements) and requirements management (dealing with change, tracking status, recording traceability data, and so on).

I’m frequently asked how much time and effort a project should allocate to these functions. The answer depends enormously on the type and size of project, the developing team and organization, and the application domain. If you track your own team’s investment in these critical project activities, you can better estimate how much effort to plan for future projects.

Suppose that on one previous project, your team expended ten percent of its effort on requirements activities. In retrospect, you conclude that the requirements were too poorly defined and the project would have benefited from additional investment in developing quality requirements. The next time your team tackles a similar project, the project manager would be wise to allocate more than ten percent of the total project effort to the requirements work.
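The percentage in this example comes from a simple time log. A minimal sketch, assuming hours are logged against activity categories; the category names and hours are hypothetical and chosen to reproduce the ten percent figure:

```python
# Hypothetical time log: (activity category, labor hours).
time_log = [
    ("requirements development", 120.0),
    ("requirements management", 40.0),
    ("design", 300.0),
    ("construction", 700.0),
    ("testing", 440.0),
]

REQUIREMENTS_ACTIVITIES = {"requirements development", "requirements management"}

total = sum(hours for _, hours in time_log)
req_hours = sum(hours for activity, hours in time_log if activity in REQUIREMENTS_ACTIVITIES)
share = req_hours / total
print(f"requirements share of total effort: {share:.1%}")
```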

As you accumulate data, correlate the project development effort with some measure of product size. The documented requirements should give you an indication of size. You could correlate effort with the count of individually testable requirements, use case points, function points, or something else that is proportional to product size. As Figure 1 illustrates, such correlations provide a measure of your development team’s productivity, which will help you estimate and scope individual release contents. If you collect some product size data and track the corresponding implementation effort, you’ll be in a better position to create meaningful estimates for similar projects in the future.

Figure 1. Correlating requirements size with project effort gives a measure of team productivity. Each point represents a separate project.

Some people are afraid that launching a software measurement effort will consume too much time, time they feel should be spent doing “real work.” My experience, though, is that a sensible and focused metrics program doesn’t take much time or effort at all. It’s mostly a matter of developing a simple infrastructure for collecting and analyzing the data, and getting team members in the habit of recording some key bits of data about their work. Once you’ve developed a measurement culture in your organization, you’ll be surprised how much you can learn from the data.


