What Is System Integration Testing (SIT)? A Complete Guide

The Essential Guide to Requirements Management and Traceability

Chapter 6: What Is System Integration Testing (SIT)? A Complete Guide

A product can pass every internal test and still fail the moment it connects to an external system. That failure is expensive to fix late in the cycle, and in regulated industries, it can delay certification timelines by weeks. System integration testing (SIT) is what catches those failures early, when the fix is still straightforward and the evidence trail is still clean.

This guide covers what SIT is, how it differs from other testing types, the main approaches teams use, and how to run it well in regulated programs.

What Is System Integration Testing (SIT)?

System integration testing (SIT) is a testing level that verifies independently developed systems and external services communicate correctly when connected. Where component integration testing checks interfaces within a single system, SIT operates at the system level and confirms that a fully built product exchanges data with external systems as specified in its interface control documents.

The International Software Testing Qualifications Board (ISTQB) v4.0 foundation syllabus defines five test levels: component testing, component integration testing, system testing, system integration testing, and acceptance testing. Each level has its own scope and exit criteria. SIT sits at the fourth level, verifying the system’s external interfaces within the broader operational environment after the system has already been internally validated.

Why SIT Is Worth Getting Right

SIT occupies a position in the development cycle where defects become expensive to fix. A requirements-phase defect caught at integration testing costs significantly more to resolve than the same defect caught during authoring, because errors introduced early propagate forward into design, implementation, and test artifacts. Unraveling an integration failure often requires changes across multiple teams and subsystems that have already been independently verified.

For teams in regulated industries, the stakes go beyond rework cost. Regulatory frameworks like DO-178C, IEC 62304, and ISO 26262 require verification activities to be documented and traced. SIT is often part of that verification, which means the testing process itself needs to produce auditable evidence. One analysis of software-related medical device recalls found that 627 devices comprising 1.4 million units were affected, showing how software defects in regulated products carry consequences well beyond the engineering team.

How SIT Differs From Other Testing Types

Understanding where SIT fits relative to other testing levels helps clarify what it covers and what it does not.

SIT vs. Component Integration Testing

Component integration testing verifies interfaces between modules within one system. SIT verifies interfaces between the system under test and external systems or services. A flight management computer’s navigation module exchanging data with its internal display module is component integration testing. That same avionics system exchanging data with a separate air traffic control system is SIT.

SIT vs. System Testing

System testing validates the complete system’s behavior against its own requirements, including non-functional aspects like performance and security. SIT then verifies that the system’s external interfaces work correctly in a realistic environment. The ISTQB v4.0 syllabus recommends the SIT environment be similar to the operational environment, because interface defects are hard to detect when external systems are simulated.

SIT vs. User Acceptance Testing (UAT)

SIT is a verification exercise focused on whether inter-system interfaces match technical specifications. User Acceptance Testing (UAT) is a validation activity focused on whether the integrated product meets user and business needs. Test engineers perform SIT using interface control documents, while intended users or their representatives perform UAT using business processes. Both are needed, and neither replaces the other.

Four Common Approaches to SIT

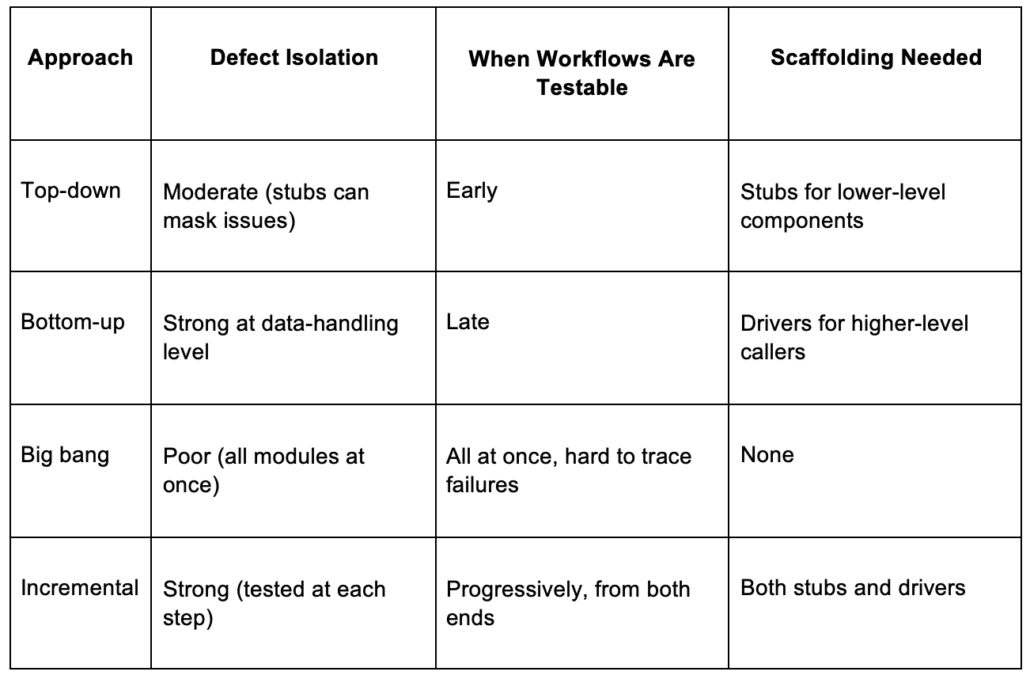

Each approach involves tradeoffs between defect isolation, schedule flexibility, and the overhead of building test scaffolding.

Top-Down Testing

Testing proceeds from the highest-level modules downward, using stubs to simulate lower-level components not yet integrated. This validates high-level workflows early, but oversimplified stubs can mask defects that only real components would reveal.
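As a rough illustration of a top-down step, the sketch below tests a high-level workflow against a stub. The `DispatchWorkflow` and `RoutingStub` classes and the route data are hypothetical names invented for this example, not part of any real system.

```python
class RoutingStub:
    """Stand-in for a lower-level routing component that is not yet integrated."""

    def __init__(self, canned_route):
        self.canned_route = canned_route
        self.calls = []

    def plan_route(self, origin, destination):
        # Record the call so the test can check how the workflow used the interface.
        self.calls.append((origin, destination))
        return self.canned_route


class DispatchWorkflow:
    """Hypothetical high-level module under test."""

    def __init__(self, routing):
        self.routing = routing

    def dispatch(self, origin, destination):
        route = self.routing.plan_route(origin, destination)
        if not route:
            return {"status": "rejected", "reason": "no route"}
        return {"status": "dispatched", "legs": len(route) - 1}


stub = RoutingStub(canned_route=["A", "B", "C"])
result = DispatchWorkflow(stub).dispatch("A", "C")
```

The stub returns the same route for every request, which is the kind of simplification that can hide defects a real routing component would expose.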

Bottom-Up Testing

Integration starts at the foundational layer, with drivers simulating higher-level callers that do not yet exist. As each subsystem layer proves stable, the test scope expands upward. Real components handling real data from the start means data-handling errors surface early. The tradeoff is that high-level workflows remain untestable until upper modules are in place, and program managers see no working system until late in the cycle.
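A bottom-up step might look like the following sketch, in which a small driver feeds frames to a real low-level component. The frame format and the `parse_position_report` function are assumptions made up for illustration.

```python
def parse_position_report(frame: bytes) -> dict:
    """Hypothetical low-level component: decode 'lat,lon,alt' ASCII frames."""
    lat, lon, alt = frame.decode("ascii").strip().split(",")
    return {"lat": float(lat), "lon": float(lon), "alt_ft": int(alt)}


def run_driver(component, frames):
    """Test driver: plays the higher-level caller that does not exist yet."""
    results = []
    for frame in frames:
        try:
            results.append(("ok", component(frame)))
        except (ValueError, UnicodeDecodeError) as exc:
            # Malformed frames are recorded rather than aborting the run.
            results.append(("error", type(exc).__name__))
    return results


outcomes = run_driver(
    parse_position_report,
    [b"47.61,-122.33,3500\n", b"47.61,-122.33\n"],
)
```

Because the component is real, the truncated second frame surfaces a data-handling error at this layer, before any upper module exists.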

Big Bang Testing

All modules are combined at once and tested as a complete system. No stubs or drivers are needed, but when a failure occurs, figuring out which interface caused it takes significant investigation. In regulated environments, big bang testing is hard to justify when auditors expect documented verification at each integration step.

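Sandwich (Hybrid) Integration Testing

Sandwich integration, also called hybrid integration, runs top-down and bottom-up testing at the same time. The system is divided into layers, with integration proceeding from both ends toward the middle, so stubs and drivers are both needed until the layers meet. Like top-down and bottom-up testing, it is incremental: each step adds a small number of interfaces. That gives regulated programs a strong traceability chain but requires careful orchestration at each increment.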

How To Run a System Integration Test

Running SIT in a regulated environment requires documented entry criteria, traceable test design, and auditable verification and validation records.

1. Define the Integration Scope

Identify which subsystems and external interfaces are in scope and document what is excluded. Assign the applicable safety or integrity level for your domain, whether that is a Design Assurance Level (DAL) for avionics, a software safety class for medical devices, or an Automotive Safety Integrity Level (ASIL) for automotive. This classification sets the rigor for every subsequent step.

2. Design Test Cases Around Real Data Flows

Test cases should target the interfaces documented in your interface control documents and external system specifications. Writing clear requirements upfront makes test case design significantly easier. Include both valid and invalid inputs, and maintain traceability between test data sets and the interface conditions they exercise. For safety-critical programs, you may also need controlled fault scenarios to validate behavior under failure conditions.
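One way to keep test data tied to interface conditions is to store the condition identifier alongside each case. The sketch below assumes a hypothetical two-rule interface (`ICD-001`, `ICD-002`) and a made-up message format; real interface control documents will define their own fields and limits.

```python
def validate_message(msg: dict) -> list:
    """Check a message against the hypothetical interface rules; return violations."""
    errors = []
    patient_id = msg.get("patient_id")
    if not isinstance(patient_id, str) or not patient_id:
        errors.append("ICD-001: patient_id must be a non-empty string")
    rate = msg.get("heart_rate_bpm")
    if not isinstance(rate, int) or not 20 <= rate <= 300:
        errors.append("ICD-002: heart_rate_bpm must be an integer in 20..300")
    return errors


# (case id, interface condition exercised, input message, expected rule violations)
CASES = [
    ("TC-01", "nominal", {"patient_id": "P-17", "heart_rate_bpm": 72}, []),
    ("TC-02", "ICD-001", {"patient_id": "", "heart_rate_bpm": 72}, ["ICD-001"]),
    ("TC-03", "ICD-002", {"patient_id": "P-17", "heart_rate_bpm": 301}, ["ICD-002"]),
    ("TC-04", "ICD-002", {"patient_id": "P-17", "heart_rate_bpm": "72"}, ["ICD-002"]),
]


def run_cases(cases):
    """Compare actual rule violations with the expected ones for each case."""
    report = {}
    for case_id, _condition, msg, expected in cases:
        actual = [error.split(":")[0] for error in validate_message(msg)]
        report[case_id] = "pass" if actual == expected else "fail"
    return report


report = run_cases(CASES)
```

Each row names the condition it exercises, so a reviewer can see at a glance which interface rules have both valid and invalid inputs behind them.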

3. Execute Tests, Log Defects, and Retest

Run tests in the sequence defined by your integration strategy, recording actual results against expected results at each step. When a test case fails, the defect record should include:

- Requirement references: Which test case and requirement are affected.

- Severity classification: Linked to risk management and safety impact where applicable.

- Behavior description: What actually happened versus what was expected.

After fixing a defect, repeat the failed tests and run regression tests on previously passing cases that the fix may have affected.
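The defect fields and the retest step above can be sketched as a small data structure plus a selection function. The field names, identifiers, and the idea of mapping test cases to interfaces are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DefectRecord:
    defect_id: str
    test_case: str      # which test case failed
    requirement: str    # which requirement is affected
    severity: str       # classification tied to risk and safety impact
    expected: str       # what should have happened
    actual: str         # what actually happened


def select_retests(defect, fixed_interfaces, case_interfaces):
    """Return the failed case first, then passing cases that touch a fixed interface."""
    regression = sorted(
        case
        for case, interfaces in case_interfaces.items()
        if case != defect.test_case and interfaces & fixed_interfaces
    )
    return [defect.test_case] + regression


defect = DefectRecord(
    defect_id="DEF-104",
    test_case="TC-12",
    requirement="IF-REQ-7",
    severity="major",
    expected="ACK within 200 ms",
    actual="no ACK received",
)
case_interfaces = {
    "TC-12": {"IF-A"},
    "TC-03": {"IF-A", "IF-B"},
    "TC-08": {"IF-C"},
}
retests = select_retests(defect, {"IF-A"}, case_interfaces)
```

Here `TC-03` is pulled into the regression run because it shares interface `IF-A` with the fix, while `TC-08` is left out.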

Common Challenges in SIT

Most SIT challenges trace back to gaps established earlier in the development lifecycle.

Interface Gaps That Surface Late

Interface requirements often receive less attention than functional requirements during authoring. When SIT begins, teams discover that interfaces between independently developed systems were assumed, not formally documented. Performance thresholds, error-handling behavior at system boundaries, and data format interpretations may differ between two systems implementing the same specification. You can avoid this by linking interface requirements to downstream test cases during authoring, so missing coverage becomes visible early.

Environment Mismatches

Test results from a non-representative environment cannot serve as valid evidence of system conformance. Whether the test environment matches the production configuration is a question auditors will ask. Legacy architecture and configuration differences between test and production can make this harder to control, but getting a realistic test environment in place early saves significant rework later.

Test Data Dependencies Across Systems

When System A passes a transaction to System B, the test data in System B must be consistent with System A’s output. Ad-hoc test data management creates risks, especially in regulated environments where data governance is expected.
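A simple pre-run consistency check can catch mismatched test data before execution starts. The sketch below assumes System A emits transactions that reference a `customer_id` which System B must already hold in its reference data; the key name and records are hypothetical.

```python
def find_unmatched_references(a_output, b_reference, key="customer_id"):
    """List key values in System A's output that System B's test data does not contain."""
    known = {record[key] for record in b_reference}
    return sorted({row[key] for row in a_output if row[key] not in known})


a_output = [
    {"txn": "T1", "customer_id": "C-100"},
    {"txn": "T2", "customer_id": "C-200"},
    {"txn": "T3", "customer_id": "C-300"},
]
b_reference = [{"customer_id": "C-100"}, {"customer_id": "C-300"}]

missing = find_unmatched_references(a_output, b_reference)
```

A non-empty result means a transaction would fail in System B for reasons unrelated to the interface under test, so the data set should be corrected before the run counts as evidence.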

How To Improve SIT Outcomes

Trace Every Test Case to a Source Requirement

Bidirectional traceability serves two functions. Forward traceability, from requirement to test case, confirms every requirement has been verified. Backward traceability, from test case to requirement, confirms every test case has a legitimate basis. Building this traceability during requirements authoring is more reliable than trying to reconstruct it later.
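Both directions can be checked mechanically once links are recorded. This is a minimal sketch that assumes requirements are a set of IDs and each test case maps to the set of requirement IDs it claims to verify; all identifiers are invented for the example.

```python
def trace_gaps(requirements, test_links):
    """Return (requirements with no test, tests with no valid requirement)."""
    covered = set().union(*test_links.values()) if test_links else set()
    # Forward direction: every requirement should appear in at least one test link.
    untested = sorted(requirements - covered)
    # Backward direction: every test should link to at least one known requirement.
    orphan_tests = sorted(
        test for test, reqs in test_links.items() if not (reqs & requirements)
    )
    return untested, orphan_tests


requirements = {"IF-REQ-1", "IF-REQ-2", "IF-REQ-3"}
test_links = {
    "TC-01": {"IF-REQ-1"},
    "TC-02": {"IF-REQ-1", "IF-REQ-2"},
    "TC-03": set(),
    "TC-04": {"IF-REQ-9"},
}

untested, orphan_tests = trace_gaps(requirements, test_links)
```

In this data, `IF-REQ-3` has no verifying test, and `TC-03` and `TC-04` have no legitimate basis because they link to nothing or to an unknown requirement.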

Automate on Stable Interfaces

Full automation of all integration test cases is not the industry norm, and volatile interfaces produce brittle automated tests that require constant maintenance. Risk-based automation targeting stable, high-criticality interfaces gives the best return for regulated programs. Focus automation efforts on the interfaces that carry the highest safety impact and change the least frequently.
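A risk-based selection could be sketched as a filter and sort over interface metadata. The criticality scale, the change counts, and both thresholds below are assumptions for illustration; a real program would derive them from its own risk assessment and change history.

```python
def automation_candidates(interfaces, max_changes=2, min_criticality=3):
    """Pick stable, high-criticality interfaces, most critical first."""
    picked = [
        item
        for item in interfaces
        if item["changes_last_quarter"] <= max_changes
        and item["criticality"] >= min_criticality
    ]
    picked.sort(key=lambda item: (-item["criticality"], item["changes_last_quarter"]))
    return [item["name"] for item in picked]


interfaces = [
    {"name": "telemetry-uplink", "criticality": 5, "changes_last_quarter": 0},
    {"name": "billing-export", "criticality": 2, "changes_last_quarter": 1},
    {"name": "alarm-relay", "criticality": 5, "changes_last_quarter": 6},
    {"name": "device-config", "criticality": 4, "changes_last_quarter": 2},
]

candidates = automation_candidates(interfaces)
```

The volatile `alarm-relay` interface is excluded despite its high criticality, which reflects the point that automated tests on frequently changing interfaces tend to be brittle.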

Bring Reviewers Into Test Planning Early

In regulated industries, the signed test plan is an audit artifact that demonstrates agreement on acceptance criteria. Getting cross-functional reviewers involved before execution begins means those criteria get validated while there is still time to adjust scope. Teams that treat the test plan sign-off as a formality often discover misaligned expectations during execution, when changes are more expensive.

Where SIT Fits in Your Verification Strategy

Most SIT failures in regulated programs do not start in the test lab. They start with requirements that were too vague to test against and interfaces that were never formally specified. Building traceability between requirements and test cases at the point of authoring catches those gaps early. In regulated programs, that same traceability converts SIT results into the documented verification evidence that auditors expect.

Jama Connect® is a requirements management and traceability platform built for regulated industries. It keeps requirements, reviews, and test cases connected so coverage gaps surface before an auditor or a failed test finds them. Start a free 30-day trial to see how it supports SIT workflows.

Frequently Asked Questions About System Integration Testing

What is the difference between SIT and UAT?

SIT verifies that inter-system interfaces conform to technical specifications like interface control documents. UAT validates that the integrated product meets user and business needs. Test engineers perform SIT against technical specifications, while intended users or their representatives perform UAT against business processes.

When should system integration testing occur?

SIT occurs after system testing as the fourth of five ISTQB v4.0 test levels. Test planning should begin during architecture design on the left side of the V-model, while test execution happens on the right side after the system has been internally verified.

What tools are commonly used for SIT?

Regulated SIT workflows typically span requirements management platforms, analysis tools for code-level compliance, and defect tracking tools. The most important capability across all of these is bidirectional traceability from requirements through test cases to test results.

How does requirements traceability improve SIT outcomes?

Forward traceability confirms every requirement has a test case verifying it. Backward traceability confirms every test case has a legitimate basis. When a requirement changes, linked test cases identify which tests need re-execution. Without this, coverage gaps stay hidden until an auditor finds untested requirements or a defect reaches production.
