What Is Research Use Only (RUO)? Definition and FDA Rules

The research use only (RUO) designation gives diagnostics teams a valuable window during early development. It lets a product support assay development and biomarker discovery before the full set of in vitro diagnostic (IVD) obligations kicks in. That window is exactly where teams generate the performance data that later carries a submission.

The catch is how narrow the exemption actually is, and how much compliance work still runs in the background. This guide covers what the RUO exemption under 21 CFR 809.10 allows, how the US and EU treat it, and what it takes to move an RUO product into a cleared IVD without losing traceability.

What Is Research Use Only (RUO)?

Research use only (RUO) is a narrow FDA exemption that lets an in vitro diagnostic product sit outside most IVD obligations while it is genuinely still in research. 21 CFR 809.10 is the FDA rule that governs IVD labeling, and its paragraph (c)(2)(i) sets three conditions that all have to hold at the same time. The product must be in the laboratory research phase, cannot be represented as an effective IVD, and must carry prominent labeling that reads “For Research Use Only. Not for use in diagnostic procedures.” Miss any one and the exemption falls away.
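The all-or-nothing logic of the three conditions can be sketched as a simple internal self-check. This is a minimal illustration only: the `ProductRecord` fields and the `failed_ruo_conditions` helper are hypothetical names, and a string match cannot judge whether the statement is "prominent" or whether a product is genuinely in research.

```python
from __future__ import annotations

from dataclasses import dataclass

# Statement text as quoted from 21 CFR 809.10 in the paragraph above.
RUO_STATEMENT = "For Research Use Only. Not for use in diagnostic procedures."


@dataclass
class ProductRecord:
    # Hypothetical fields for illustration; not a regulatory data model.
    in_research_phase: bool
    represented_as_effective_ivd: bool
    labeling_text: str


def failed_ruo_conditions(product: ProductRecord) -> list[str]:
    """Return the conditions that fail; an empty list means all three hold."""
    failures = []
    if not product.in_research_phase:
        failures.append("not in laboratory research phase")
    if product.represented_as_effective_ivd:
        failures.append("represented as an effective IVD")
    # Only checks presence of the text, not its prominence on the label.
    if RUO_STATEMENT not in product.labeling_text:
        failures.append("missing RUO statement")
    return failures


kit = ProductRecord(True, False, "Kit X. " + RUO_STATEMENT)
print(failed_ruo_conditions(kit))  # empty list: all three conditions hold
```

Returning the list of failed conditions, instead of a single boolean, makes it obvious which condition put the exemption at risk.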

The designation covers instruments, reagents, software, and test systems used for early assay work, method development, and biomarker discovery. Minimum labeling still applies, so the carton needs the RUO statement, net quantity, manufacturer identity, and a lot number that ties back to manufacturing history. Premarket review, quality system requirements, and post-market surveillance all sit outside the RUO envelope.

How the RUO Regulatory Framework Works in the US and EU

FDA and EU regulators both judge RUO status by intended use, and the carton is only one piece of evidence. Both frameworks reconstruct intent from the full set of manufacturer communications and commercial conduct.

How the FDA Evaluates Objective Intent

FDA applies an objective-intent standard when deciding whether an RUO claim holds. Investigators weigh labeling, advertising, website copy, technical support content, sales records, and customer lists together. During an inspection, they pull website archives, purchase orders, shipping records, and support tickets, then compare the commercial pattern to the research-phase claim on the carton.

An RUO statement by itself protects nothing once the record points to clinical use. Sales concentrated in clinical analysis companies, marketing copy that names diagnostic applications, and support staff walking customers through clinical workflows all count as evidence of intent. If the evidence contradicts the label, FDA can treat the product as misbranded under section 502 of the FD&C Act and adulterated under section 501.

How EU IVDR 2017/746 Treats RUO Products

EU IVDR 2017/746, the EU regulation on in vitro diagnostic medical devices, applies to products intended for a medical purpose. Article 1(3)(a) takes products for general laboratory use and research-use-only products out of scope, unless their characteristics show that the manufacturer specifically intends them for in vitro diagnostic examination. A product that stays inside that scope exclusion does not need CE marking under IVDR.

Medical Device Coordination Group (MDCG) guidance tightens the same idea. An RUO product cannot be promoted, supported, or sold in a way that implies diagnostic use. Drifting into clinical promotion pulls the product back inside IVDR and turns it into an IVD without CE marking, which creates the same exposure as a mislabeled product in the US.

What the Exemption Does Not Cover

The RUO relief covers premarket review, 21 CFR Part 820 quality system obligations, and post-market surveillance. It stops at the point where distribution contradicts the label or the product moves into clinical diagnosis. In the EU, an RUO product outside IVDR scope can still fall under product safety, chemical, and biosafety rules, so the scope exclusion is not a blanket pass on regulation.

How RUO Products Differ From Cleared IVDs

The gap between an RUO product and a cleared IVD is wider than it looks from a labeling perspective. The table below shows where the two sit across the dimensions that matter most during transition planning.

| Dimension | RUO Product | Cleared IVD |
| --- | --- | --- |
| Intended use | Laboratory research phase only, no clinical diagnosis or patient management | Clinical diagnosis and patient management within the cleared intended use |
| Labeling | RUO statement plus minimum identity and lot information | Full labeling per 21 CFR 809.10(a) and (b), including intended use, performance, and warnings |
| Premarket review | Not required | Required via 510(k), De Novo, or PMA |
| Quality management system | Not required under 21 CFR Part 820 | Required, including design controls across the product lifecycle |
| Clinical claims | None permitted | Permitted within the cleared scope |
| Post-market obligations | Outside MDR, correction, and removal obligations | Medical Device Reporting (MDR), corrections and removals, and surveillance apply |

Technical support is where the line tends to blur. Generic instrument maintenance and software patches stay inside the research frame. Helping a customer validate a clinical workflow or walking staff through clinical result calls looks to FDA like diagnostic intent.

Where RUO Products Fit in Diagnostic Development

RUO products earn their place in four settings where a clinical claim would be premature and the work is still about characterizing performance.

- Early assay development: Teams tune instrument settings, reagent combinations, and software parameters before locking a design input. The RUO label fits because there is nothing yet to validate against, and pretending otherwise would create a misbranding problem.

- Biomarker discovery and translational research: Exploratory work generates hypotheses and results that never feed patient decisions. RUO reagents fit that scope and keep the work outside premarket review until a specific indication emerges.

- LDT component supply: Reference laboratories use RUO components inside laboratory-developed tests they validate themselves under Clinical Laboratory Improvement Amendments (CLIA) oversight. The laboratory carries regulatory responsibility for the finished test, so the RUO label correctly reflects the component maker’s scope.

- Analytical method development: Method development teams pick antibody pairs, characterize interference, and probe matrix effects before formal analytical validation begins. RUO tools carry the right claim here because the performance specifications are still being written.

Using RUO deliberately in these four settings gives diagnostics teams clean, defensible data for the point when the same product carries a clinical claim. A well-structured medical device requirements practice during this phase makes the eventual submission easier.

What Triggers FDA Enforcement

FDA acts on RUO products when intent points to clinical use in spite of the label. Two recent warning letters show the evidence pattern investigators build.

The Agena warning letter in March 2024 covered the iPLEX HS Colon Panel. FDA found sales into CLIA-certified labs doing patient testing and dual distribution confirmed in the company’s own records, and concluded that the RUO disclaimer was inconsistent with the commercial pattern.

The DRG warning letter in March 2025 covered the Salivary Cortisol ELISA RUO and related devices. FDA cited website copy describing clinical applications like diagnosis of systemic conditions and therapeutic drug monitoring, along with shipments to clinical analysis companies. In both cases the RUO statement sat where it was supposed to, and the rest of the record carried the product across the line. Consequences can include seizure, injunction, civil money penalties, and pressure to pursue a 510(k) or PMA before further distribution.

How to Transition an RUO Product Into an IVD

A product needs to move out of RUO status when its intended use shifts into clinical diagnosis, or when the way it sells starts to imply that shift. A last-minute relabeling push usually stalls while reviewers ask for records that were never captured under design controls. The work runs best as a structured program with three connected moves.

Start Design Controls Before Investigational Work

Open design controls under ISO 13485:2016 right after feasibility and before any investigational studies begin. Starting the Design History File early captures inputs, outputs, verification, validation, and reviews in real time, with authors and dates attached. Back-filling a DHF close to submission is the pattern auditors spot fastest, and a record reconstructed from memory always reads differently from one built as the work happened.

Time the QMSR Transition Correctly

The FDA Quality Management System Regulation (QMSR) took effect on February 2, 2026, and incorporates ISO 13485:2016 by reference. Build to QMSR from the start of transition so you avoid a second round of documentation work later and stay compatible with EU markets at the same time. Teams already certified to ISO 13485:2016 still need to address FDA-specific additions like Unique Device Identification and Medical Device Reporting.

Pick the Submission Pathway That Fits the Device

Classification decides the path. Most Class II IVDs go through 510(k) on the strength of a cleared predicate, novel Class II devices without one use De Novo, and Class III IVDs require PMA with full analytical and clinical validation data. Analytical studies built to recognized Clinical and Laboratory Standards Institute (CLSI) documents give reviewers a design they already know how to evaluate.
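The pathway logic described above can be written as a small decision function. This is a deliberately simplified sketch: the `likely_pathway` name and its two parameters are illustrative assumptions, it covers only the Class II and Class III cases named here, and a real classification decision depends on the specific device regulation and FDA feedback.

```python
from enum import Enum


class Pathway(Enum):
    FIVE_TEN_K = "510(k)"
    DE_NOVO = "De Novo"
    PMA = "PMA"


def likely_pathway(device_class: int, has_predicate: bool) -> Pathway:
    """Map device class and predicate availability to a typical pathway."""
    if device_class == 3:
        # Class III IVDs require PMA regardless of predicate status.
        return Pathway.PMA
    if device_class == 2:
        # A cleared predicate supports 510(k); without one, De Novo applies.
        return Pathway.FIVE_TEN_K if has_predicate else Pathway.DE_NOVO
    raise ValueError("device class not covered by this sketch")


print(likely_pathway(2, has_predicate=False).value)  # De Novo
```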

With the program in place, the harder question is whether the underlying records can actually support the submission.

Why Traceability Breaks Down During Transition

Research-phase documentation usually lives in spreadsheets, lab notebooks, and file shares, with design decisions scattered across authors and versions. That setup holds until a regulated submission asks for a single, current, reviewable chain from user need through verification and validation evidence. An auditor who pulls a random design input expects to walk straight to the linked verification output, and any gap becomes a finding.

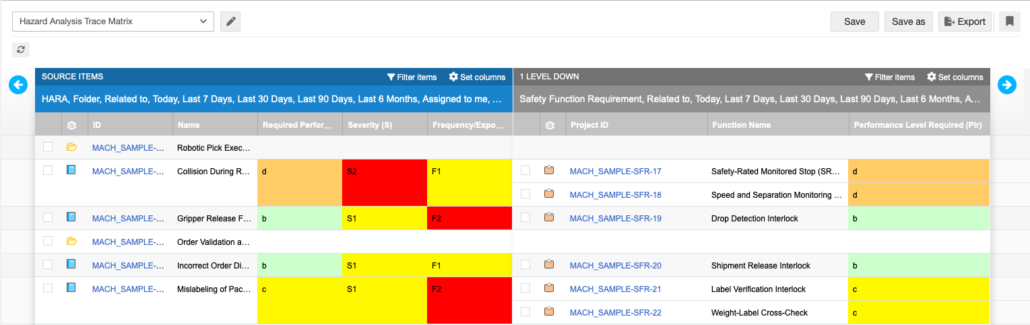

A requirements traceability matrix links design inputs to design outputs, verification, and validation in one view, and risk outputs from ISO 14971 hazard analysis feed the same chain through inputs and change management. Teams managing this in disconnected files carry a compounding maintenance burden, since every design change forces manual updates across many documents, and a single missed link can surface as an audit finding months later.
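As a rough illustration of the kind of gap an auditor looks for, the sketch below models each design input with its linked outputs, verification records, and validation records, then reports any input with a missing link. The `DesignInput` structure, the `trace_gaps` helper, and the ID formats are hypothetical, not a prescribed schema.

```python
from dataclasses import dataclass, field


@dataclass
class DesignInput:
    # Hypothetical minimal record: one design input and its trace links.
    id: str
    outputs: list = field(default_factory=list)
    verifications: list = field(default_factory=list)
    validations: list = field(default_factory=list)


def trace_gaps(inputs):
    """Return {design_input_id: [missing link types]} for incomplete chains."""
    gaps = {}
    for design_input in inputs:
        missing = [
            link_type
            for link_type in ("outputs", "verifications", "validations")
            if not getattr(design_input, link_type)
        ]
        if missing:
            gaps[design_input.id] = missing
    return gaps


matrix = [
    DesignInput("DI-1", ["DO-1"], ["VER-1"], ["VAL-1"]),
    DesignInput("DI-2", ["DO-2"]),  # verification and validation not linked yet
]
print(trace_gaps(matrix))  # {'DI-2': ['verifications', 'validations']}
```

Running a check like this on every design change is the manual burden the paragraph above describes when the links live in disconnected files.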

How Jama Connect Supports RUO-to-IVD Transition

Most breakdowns during RUO-to-IVD transition trace back to records that held up for research-phase work but cannot stand up to a design review. When design inputs, risk items, verification records, and change history live in separate systems, the chain a reviewer expects gets stitched together manually, and design transfer slows because production specs cannot be cleanly tied back to the validated design.

Jama Connect® is a requirements management platform for regulated product development, with pre-built frameworks for ISO 13485, FDA QMSR, EU IVDR, ISO 14971, and IEC 62304. Those frameworks keep design, risk, and verification records connected as an RUO program matures into a cleared IVD. When a requirement changes, suspect links alert the downstream owners who need to review verification evidence and risk management outputs.
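The general idea behind suspect links can be shown with a generic graph walk. This is a conceptual sketch only, not Jama Connect's API or implementation: the `suspect_items` function, the link dictionary, and the item IDs are all hypothetical, and whether a tool flags only direct links or the whole downstream chain is a design choice (this version walks the whole chain).

```python
from collections import deque


def suspect_items(links, changed):
    """Return every item downstream of `changed`.

    `links` maps an item ID to the IDs that trace directly from it.
    """
    seen = set()
    queue = deque(links.get(changed, []))
    while queue:
        item = queue.popleft()
        if item in seen:
            continue
        seen.add(item)
        queue.extend(links.get(item, []))
    # Guard against cycles flagging the changed item itself.
    seen.discard(changed)
    return seen


links = {
    "REQ-1": ["VER-1", "RISK-3"],
    "VER-1": ["VAL-1"],
}
print(sorted(suspect_items(links, "REQ-1")))  # ['RISK-3', 'VAL-1', 'VER-1']
```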

Treating RUO as a Structured Phase of a Regulated Program

RUO pays off most for teams that treat it as a structured phase of a longer program. The research-phase work generates the data that will carry a submission, and the quality of that record depends on whether requirements, risk, and verification were connected from the start. Recent enforcement makes a record reconstructed under audit pressure much more expensive than it used to be.

A single system for requirements, risk, testing, and regulatory records gives diagnostics teams a way to see an RUO program as it matures. Jama Connect supports that workflow with its medical device and IVD frameworks, keeping traceability intact as a product moves toward a cleared IVD. Start a free trial of Jama Connect today to see how it keeps the record current.

Frequently Asked Questions About Research Use Only

Can an RUO product be sold to clinical laboratories?

Selling to clinical labs is not automatically a problem. It becomes one when the surrounding conduct points to clinical use, through promotional material, clinical support, or a customer book concentrated in clinical analysis companies. The Agena and DRG warning letters turned on exactly that combination of evidence.

When does an RUO product need to transition to an IVD?

Once commercial behavior, marketing copy, or support practice implies clinical use, the product is on the wrong side of the RUO line whether or not the carton has been updated. Building design controls, risk records, and traceability during the RUO phase makes the conversion to a regulated program much less painful than rebuilding the record later.

How does the QMSR affect RUO-to-IVD transition plans?

QMSR replaces most of 21 CFR Part 820 and incorporates ISO 13485:2016 by reference, with an effective date of February 2, 2026. A transition plan built to QMSR from the start carries both FDA and ISO 13485 expectations at once. Teams already certified to ISO 13485:2016 mostly need to address FDA-specific additions like Unique Device Identification and Medical Device Reporting.

What is the difference between RUO status in the US and EU?

Both frameworks judge intended use through the full body of a manufacturer’s communications, so label text is never the only input. FDA applies an objective-intent standard across labeling, advertising, support content, and customer records, while EU IVDR 2017/746 uses an Article 1(3)(a) scope exclusion for products genuinely intended for research. Promoting for clinical use creates enforcement exposure in both jurisdictions even when the carton still reads RUO.